Reinforcement Learning: Teaching Machines Through Trial and Error

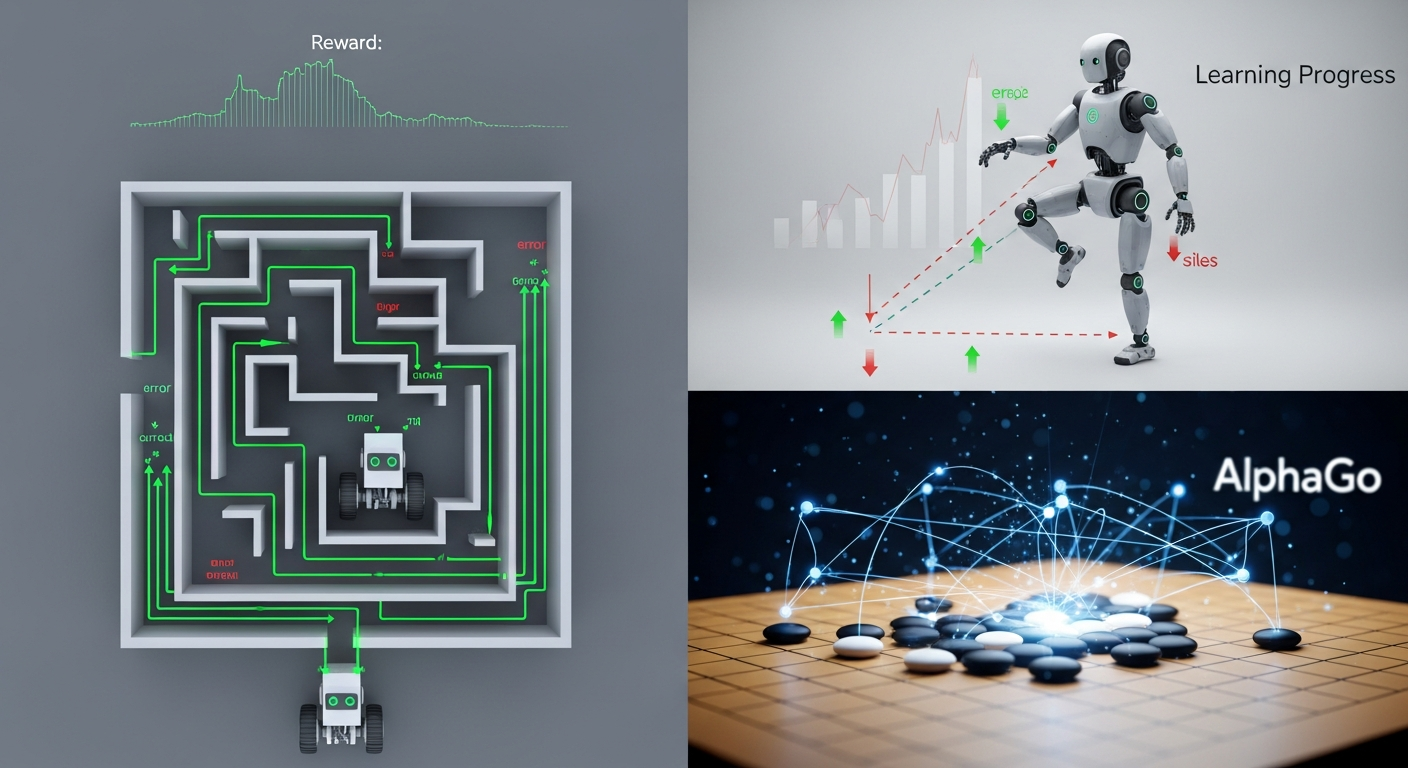

Instead of labeled data, reinforcement learning agents learn by interacting with an environment and collecting rewards. It's how AlphaGo mastered Go and robots learn to walk.

Think about how you learn to ride a bike. No one gives you a manual. No one tells you exactly how to balance, how to pedal, how to turn. You get on, you try, you fall. You try again, you fall a little less. Over time, through trial and error, you learn. You learn what works and what doesn't. You learn from the consequences of your actions.

Reinforcement learning (RL) is the branch of machine learning that captures this kind of learning. Instead of being told the right answer, an RL agent learns by interacting with an environment, taking actions, and receiving rewards or penalties. The goal is to learn a strategy—called a policy—that maximizes the total reward over time.

The Reinforcement Learning Loop

Reinforcement learning has a simple but powerful structure:

- The agent is in a state.

- The agent takes an action.

- The environment transitions to a new state.

- The agent receives a reward (positive or negative).

- Repeat.

A policy is simply a mapping from states to actions. Nobody tells the agent which action is right. It has to figure that out by trying things and seeing what happens.
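The loop above can be sketched in a few lines of Python. This is only an illustration: the `CorridorEnv` class, its reward values, and the two policies are made up for this example and are not taken from any library.

```python
import random


class CorridorEnv:
    """Hypothetical toy environment: cells 0..4 in a row, goal at the last cell."""

    def __init__(self, length=5):
        self.length = length
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # action 1 moves right, anything else moves left; walls clamp the position
        move = 1 if action == 1 else -1
        self.state = min(max(self.state + move, 0), self.length - 1)
        done = self.state == self.length - 1
        reward = 1.0 if done else -0.1  # assumed: small cost per step, bonus at the goal
        return self.state, reward, done


def run_episode(env, policy, max_steps=100):
    state = env.reset()                          # the agent is in a state
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(state)                   # the agent takes an action
        state, reward, done = env.step(action)   # new state and reward come back
        total_reward += reward
        if done:
            break                                # otherwise: repeat
    return total_reward


def random_policy(state):
    return random.choice([0, 1])


def always_right(state):
    return 1


env = CorridorEnv()
print("always right:", run_episode(env, always_right))
print("random:", run_episode(env, random_policy))
```

Learning, in this picture, means replacing `random_policy` with something that collects more reward per episode.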

This is fundamentally different from supervised learning. In supervised learning, you have a dataset of correct examples. The model learns to imitate those examples. In reinforcement learning, there are no correct examples. The agent has to discover what works through exploration.

Exploration vs. Exploitation

Reinforcement learning agents face a fundamental tradeoff: exploration vs. exploitation.

Exploitation means doing what you already know works. If you know that turning left gives a reward, you turn left. This gets you reward now, but you might miss out on discovering something even better.

Exploration means trying new things, even if they might not work. If you try turning right, you might discover a much bigger reward. Or you might get nothing. Exploration can lead to better long-term outcomes, but it comes at a short-term cost.

Balancing exploration and exploitation is one of the central challenges in reinforcement learning. Too much exploitation, and you might get stuck in a local optimum. Too much exploration, and you waste time trying things that don't work.
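One common way to strike this balance is an epsilon-greedy rule: with a small probability, pick a random action, otherwise pick the action that currently looks best. The sketch below applies it to a made-up slot-machine ("bandit") problem. The function name, the arm payouts, and the Gaussian noise are all assumptions for illustration.

```python
import random


def epsilon_greedy_bandit(true_means, epsilon, steps=5000, seed=0):
    """Play a hypothetical multi-armed bandit with an epsilon-greedy rule."""
    rng = random.Random(seed)
    n_arms = len(true_means)
    counts = [0] * n_arms        # how often each arm was pulled
    estimates = [0.0] * n_arms   # running average reward per arm
    total = 0.0
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)                           # explore
        else:
            arm = max(range(n_arms), key=lambda a: estimates[a])  # exploit
        reward = rng.gauss(true_means[arm], 1.0)                  # noisy payout
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
        total += reward
    return total / steps, counts


for eps in (0.0, 0.1, 0.5):
    average_reward, pulls = epsilon_greedy_bandit([0.2, 0.5, 1.0], epsilon=eps)
    print(f"epsilon={eps}: average reward {average_reward:.2f}, pulls per arm {pulls}")
```

Setting `epsilon` to 0 gives pure exploitation, which can lock onto whichever arm looked good first. A large `epsilon` keeps spending pulls on arms already known to be worse.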

Key Concepts

Several key concepts are important for understanding reinforcement learning:

States and observations: The state is the complete description of the environment. In many real problems, the agent doesn't see the full state—it only gets observations. A self-driving car sees camera images and sensor readings, not the complete state of the world.

Actions: The actions are what the agent can do. In a video game, actions might be moving left, moving right, jumping, and shooting. In robotics, actions might be joint angles or motor commands.

Rewards: The reward is the feedback signal. It tells the agent whether what it did was good or bad. Rewards can be immediate or delayed. In chess, the only reward comes at the end: win, lose, or draw. Everything before that is about setting up the win.

Policy: The policy is the agent's strategy. It maps states to actions. It might be a simple table, a complex neural network, or something in between.

Value function: The value function estimates how good a state is. It's the total future reward the agent can expect to collect from that state onward, assuming it follows its policy. This helps the agent choose actions that lead to good states, even if the immediate reward is small.
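To make "total reward over time" concrete, here is a small sketch. It assumes a discount factor `gamma`, which the text above does not mention: a common convention that counts later rewards slightly less than immediate ones. The helper names are illustrative.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum of rewards where a reward k steps ahead is weighted by gamma**k."""
    total = 0.0
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


def estimate_value(reward_sequences, gamma=0.9):
    """Estimate a state's value by averaging the returns of sample episodes
    that all start from that state (a simple Monte Carlo estimate)."""
    returns = [discounted_return(seq, gamma) for seq in reward_sequences]
    return sum(returns) / len(returns)


# A delayed reward: nothing for two steps, then a reward of 1.
print(discounted_return([0.0, 0.0, 1.0], gamma=0.9))

# Two sample episodes from the same state, averaged into a value estimate.
print(estimate_value([[1.0], [0.0, 1.0]], gamma=0.5))
```

The first call gives about 0.81: the reward of 1 arrives two steps late, so it is weighted by 0.9 twice.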

How Reinforcement Learning Works

There are several main approaches to reinforcement learning:

Value-based methods: These methods learn the value function directly. The agent estimates how good each state is, then takes actions that lead to high-value states. Q-learning is a classic example. It learns the value of taking each action in each state, then chooses the action with the highest value.
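Here is a minimal sketch of tabular Q-learning on a made-up five-cell corridor, where the only reward is 1 for reaching the rightmost cell. The environment, the hyperparameters (`alpha`, `gamma`, `epsilon`), and the random tie-breaking are assumptions chosen for illustration.

```python
import random

N_STATES, GOAL = 5, 4
ACTIONS = [0, 1]  # 0 = left, 1 = right


def step(state, action):
    """Hypothetical corridor dynamics: reward 1.0 only on reaching the goal."""
    next_state = min(max(state + (1 if action == 1 else -1), 0), N_STATES - 1)
    done = next_state == GOAL
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)   # occasional random action
            else:
                best = max(q[state])           # greedy action, ties broken randomly
                action = rng.choice([a for a in ACTIONS if q[state][a] == best])
            next_state, reward, done = step(state, action)
            # Target: immediate reward plus discounted best value of the next state.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q_table = q_learning()
for s in range(GOAL):
    print(s, [round(v, 3) for v in q_table[s]])
```

After training, "right" should score higher than "left" in every non-goal cell, and the greedy policy simply reads the best action off the table.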

Policy-based methods: These methods learn the policy directly. Instead of learning values and then choosing actions, they learn a function that maps states directly to actions. Policy gradient methods adjust the policy in the direction that increases expected reward.
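A small sketch of the policy gradient idea, using the simplest setting available: a bandit with no states, a softmax policy over three arms, and a running-average baseline. The payouts, learning rate, and function names are assumptions for illustration, not a reference implementation.

```python
import math
import random


def softmax(prefs):
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def policy_gradient_bandit(true_means, steps=3000, lr=0.1, noise=0.1, seed=0):
    rng = random.Random(seed)
    prefs = [0.0] * len(true_means)  # policy parameters: one preference per arm
    baseline = 0.0                   # running average of rewards seen so far
    for t in range(1, steps + 1):
        probs = softmax(prefs)
        action = rng.choices(range(len(prefs)), weights=probs)[0]
        reward = rng.gauss(true_means[action], noise)
        baseline += (reward - baseline) / t
        advantage = reward - baseline
        for a in range(len(prefs)):
            # Gradient of log pi(action) with respect to preference a.
            grad_log_pi = (1.0 if a == action else 0.0) - probs[a]
            prefs[a] += lr * advantage * grad_log_pi
    return softmax(prefs)


print([round(p, 3) for p in policy_gradient_bandit([0.2, 0.5, 1.0])])
```

There is no value table here. The parameters of the policy itself are nudged so that actions which paid better than average become more likely.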

Actor-critic methods: These combine both approaches. The "actor" is the policy that chooses actions. The "critic" is the value function that evaluates how good those actions are. The actor learns from the critic's feedback.
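As a rough sketch, a one-step actor-critic on a made-up five-cell corridor might look like the following. The critic's feedback is taken to be the temporal-difference error, which is one common choice. The environment, step sizes, and names are assumptions for illustration.

```python
import math
import random

N_STATES, GOAL = 5, 4


def step(state, action):
    """Hypothetical corridor: action 1 moves right, 0 moves left, goal pays 1.0."""
    next_state = min(max(state + (1 if action == 1 else -1), 0), N_STATES - 1)
    done = next_state == GOAL
    return next_state, (1.0 if done else 0.0), done


def action_probs(prefs):
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    z = sum(exps)
    return [e / z for e in exps]


def actor_critic(episodes=500, alpha_actor=0.2, alpha_critic=0.2,
                 gamma=0.9, max_steps=200, seed=0):
    rng = random.Random(seed)
    prefs = [[0.0, 0.0] for _ in range(N_STATES)]  # actor: action preferences per state
    values = [0.0] * N_STATES                      # critic: value estimate per state
    for _ in range(episodes):
        state = 0
        for _step in range(max_steps):
            probs = action_probs(prefs[state])
            action = 0 if rng.random() < probs[0] else 1
            next_state, reward, done = step(state, action)
            bootstrap = 0.0 if done else gamma * values[next_state]
            td_error = reward + bootstrap - values[state]  # the critic's feedback
            values[state] += alpha_critic * td_error       # critic update
            for a in (0, 1):                               # actor update
                grad_log_pi = (1.0 if a == action else 0.0) - probs[a]
                prefs[state][a] += alpha_actor * td_error * grad_log_pi
            state = next_state
            if done:
                break
    return prefs, values


prefs, values = actor_critic()
print([round(action_probs(p)[1], 3) for p in prefs[:GOAL]])  # P(right) per state
print([round(v, 3) for v in values])
```

A positive TD error means the action turned out better than the critic expected, so the actor makes it more likely. A negative one makes it less likely.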

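Model-based methods: These methods learn a model of the environment, that is, a prediction of the next state and reward for a given action. If you know how the world works, you can plan. You can imagine different sequences of actions, predict what will happen, and choose the best one. Model-based methods tend to be more sample-efficient, but an accurate model is hard to learn, and planning with a flawed model compounds its errors.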
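The planning half of this idea can be sketched with value iteration. The example assumes the model has already been learned and happens to be exact. Here `model` is a hand-written stand-in for a made-up five-cell corridor, not the output of any real learning procedure.

```python
N_STATES, GOAL = 5, 4
ACTIONS = (0, 1)  # 0 = left, 1 = right


def model(state, action):
    """Stand-in for a learned model: predicts (next_state, reward)."""
    next_state = min(max(state + (1 if action == 1 else -1), 0), N_STATES - 1)
    return next_state, (1.0 if next_state == GOAL else 0.0)


def backup(state, action, values, gamma):
    """Predicted reward plus discounted value of the predicted next state."""
    next_state, reward = model(state, action)
    return reward + gamma * values[next_state]


def plan(gamma=0.9, sweeps=50):
    values = [0.0] * N_STATES  # the goal is terminal, so its value stays 0
    for _ in range(sweeps):
        for s in range(GOAL):
            values[s] = max(backup(s, a, values, gamma) for a in ACTIONS)
    # Best action in each non-terminal state, according to the model.
    policy = [max(ACTIONS, key=lambda a: backup(s, a, values, gamma))
              for s in range(GOAL)]
    return values, policy


values, policy = plan()
print([round(v, 3) for v in values])
print(policy)
```

No environment interaction happens inside `plan`: every "what if" is answered by the model. That is where the sample efficiency comes from, and also why model errors matter.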

What Reinforcement Learning Can Do

Reinforcement learning has achieved remarkable results:

Game playing: RL agents have mastered games from Atari to Go to StarCraft. In 2016, DeepMind's AlphaGo defeated Lee Sedol, one of the world's top Go players, at a game considered too complex for computers just a decade earlier. AlphaZero learned chess, shogi, and Go purely through self-play, starting from nothing but the rules, and beat the best specialized programs in all three.

Robotics: RL is used to teach robots to walk, grasp objects, manipulate tools, and perform complex tasks. Robots learn through trial and error, often in simulation before being deployed in the real world.

Resource management: RL is used to manage data centers, reducing energy consumption by learning when to turn servers on and off. It's used in inventory management, learning how much to stock to meet demand while minimizing costs.

Personalization: Recommendation systems use RL to learn what content to show users to maximize engagement over time.

Autonomous vehicles: RL is being explored for learning driving policies (when to accelerate, brake, and steer), mostly in simulation, where exploration is safe, and to a lesser extent from real-world experience.

The Challenges

Reinforcement learning is powerful but has serious challenges:

Sample efficiency: RL agents often need millions or billions of interactions to learn. Humans can learn from just a few examples. Making RL more sample-efficient is a major research direction.

Reward design: Defining the right reward function is tricky. If the reward is wrong, the agent will optimize for something you didn't intend. A robot trained to move fast might learn to fall over and spin, which technically counts as moving fast.

Safety: In the real world, exploration is dangerous. A robot that's trying random actions in a factory could hurt someone. Making RL safe for real-world deployment is an active area of research.

Generalization: RL agents often don't generalize well. An agent trained on one level of a game might fail on a slightly different level. An agent trained in simulation might fail in the real world.

The Future

Reinforcement learning is advancing rapidly:

More sample-efficient algorithms: New methods learn from fewer interactions, making RL practical for more problems.

Better simulation: More realistic simulations allow RL agents to learn in the virtual world and transfer that knowledge to the real world.

Combining with other AI: RL combined with large language models enables agents that can understand instructions and goals in natural language.

Real-world deployment: More RL systems are being deployed in production—in recommendation systems, data center management, and robotics.

Conclusion

Reinforcement learning is a powerful approach to building intelligent systems. It doesn't require labeled data or human demonstrations. It just requires a clear goal—encoded in a reward function—and the ability to interact with an environment.

From game playing to robotics to resource management, reinforcement learning is already making an impact. As algorithms become more efficient and hardware becomes more powerful, RL will be applied to increasingly complex and important problems.

The idea is simple: try things, see what works, do more of what works. It turns out that this trial-and-error process—so natural to biological organisms—can be formalized mathematically and scaled up to create some of the most impressive AI systems ever built.