Natural Language Processing: Teaching Computers to Understand Human Language

From rule-based systems to transformer models, NLP has transformed how computers interact with human language—enabling translation, summarization, question answering, and more.

Language is perhaps the most human thing about us. We use it to share ideas, tell stories, make plans, express emotions. It's how we think, how we connect, how we build civilization.

For computers, language is hard. Human language is messy, ambiguous, and constantly evolving. The same words can mean different things in different contexts. The same idea can be expressed in countless ways. Sarcasm, humor, and emotion add layers of meaning that aren't in the words themselves.

Natural Language Processing (NLP) is the field of artificial intelligence that tries to bridge this gap. It aims to teach computers to understand, interpret, and generate human language in ways that are useful.

Why Language Is Hard for Computers

To appreciate what NLP does, we need to understand why language is so challenging.

Ambiguity: The word "bank" can mean a financial institution, the side of a river, or the act of tilting an airplane. The sentence "I saw her duck" could mean I saw the duck she owns, or I saw her lower her head.

Context: "That was sick!" could be an insult or a compliment, depending on whether you're talking about a meal or a skateboard trick.

Structure: Language has complex grammatical structures. Words can be arranged in countless ways to express similar ideas. "John hit Mary" and "Mary was hit by John" mean the same thing but have different structures.

Knowledge: Understanding language often requires world knowledge. If someone says "I'm going to the bank," you will probably assume they mean the financial institution, not the river bank, because running an errand at a bank is far more common than heading to a riverside.

The Evolution of NLP

NLP has gone through several phases as researchers tried different approaches to these challenges.

Rule-based systems: The earliest NLP systems used hand-coded rules. They had dictionaries of words, grammar rules, and knowledge bases. If the rules covered what you wanted to do, the system worked. If they didn't, it failed. These systems were brittle and didn't scale.

Statistical NLP: In the 1990s and 2000s, NLP shifted to statistical methods. Instead of writing rules, researchers built models that learned patterns from large amounts of text. Machine translation systems learned from millions of translated sentences. Speech recognition systems learned from thousands of hours of transcribed audio. This was a big improvement, but the models still depended on hand-crafted features and struggled to capture long-range context.

Deep Learning NLP: Starting around 2013, deep learning revolutionized NLP. Instead of hand-crafted features, neural networks learned representations directly from text. Instead of separate models for different tasks, the same architectures could be adapted to many tasks. This is the era we're in now.
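To make the contrast between the first two phases concrete, here is a minimal sketch in Python. Everything in it is invented for illustration: the word lists, the four training sentences, and the function names are assumptions, and the "learned" classifier is simple word-count voting rather than any particular historical system. It shows a hand-written rule list failing on a word nobody anticipated, while a model that counts words in labeled examples handles it.

```python
from collections import Counter

# Hypothetical hand-written word lists (the rule-based approach).
POSITIVE_WORDS = {"good", "great", "excellent"}
NEGATIVE_WORDS = {"bad", "terrible", "awful"}


def rule_based_sentiment(text):
    """Classify text using only the fixed word lists above."""
    words = text.lower().split()
    score = sum(w in POSITIVE_WORDS for w in words) - sum(w in NEGATIVE_WORDS for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "unknown"  # the rules have nothing to say


# Tiny invented training set (the statistical approach learns from examples).
TRAINING = [
    ("the food was delicious and fresh", "positive"),
    ("a delicious meal with friendly staff", "positive"),
    ("the service was slow and rude", "negative"),
    ("slow kitchen and stale bread", "negative"),
]


def train_word_counts(examples):
    """Count how often each word appears under each label."""
    counts = {"positive": Counter(), "negative": Counter()}
    for text, label in examples:
        counts[label].update(text.lower().split())
    return counts


def learned_sentiment(text, counts):
    """Each word votes by how much more often it was seen in one label than the other."""
    words = text.lower().split()
    score = sum(counts["positive"][w] - counts["negative"][w] for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "unknown"


counts = train_word_counts(TRAINING)
print(rule_based_sentiment("the soup was delicious"))       # rules never listed "delicious"
print(learned_sentiment("the soup was delicious", counts))  # learned from the examples
```

The rule-based function only improves when someone edits the word lists by hand; the learned one improves when it is given more labeled text, which is why the statistical approach scaled better.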

Word Embeddings: The Foundation

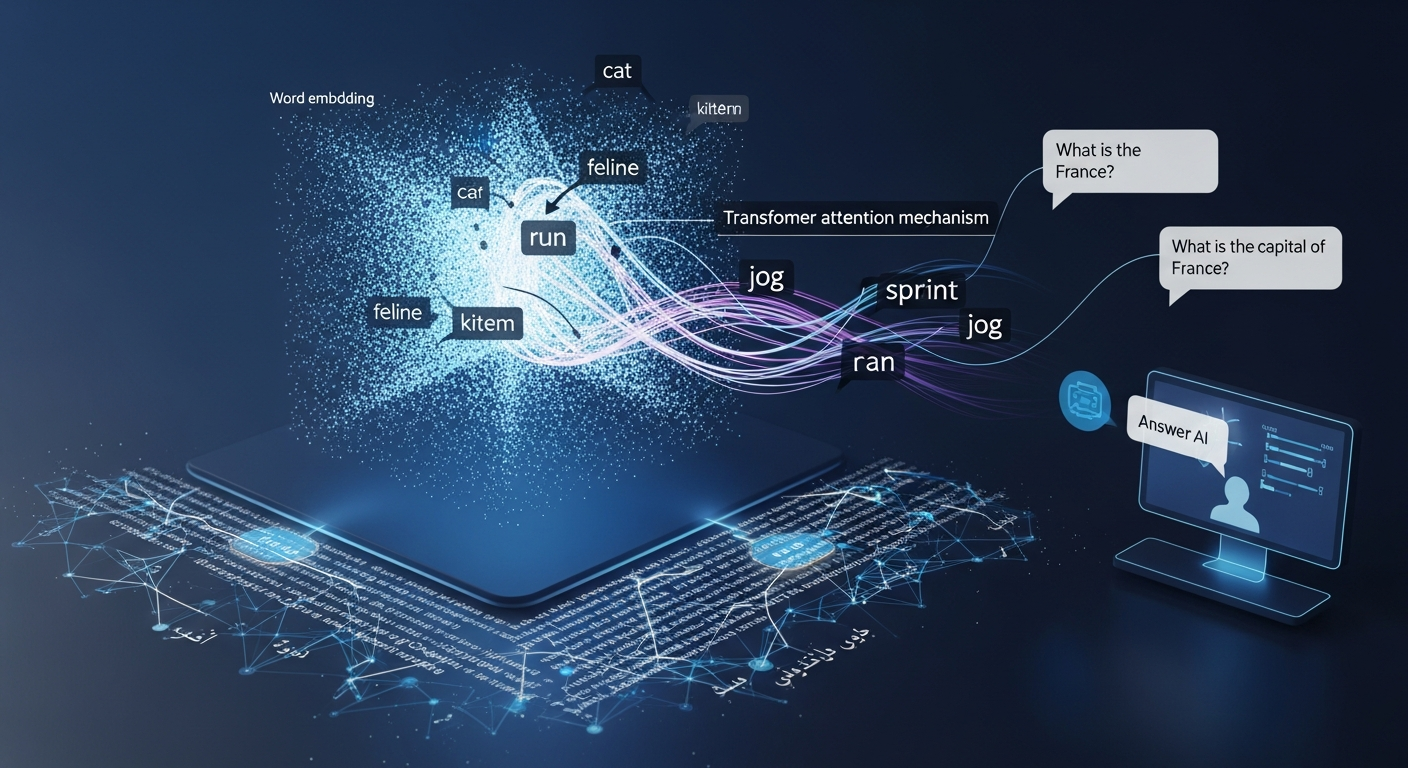

One of the key ideas in modern NLP is word embeddings. Instead of representing words as discrete symbols, embeddings represent them as vectors—lists of numbers—in a high-dimensional space.

The magic is that the geometry of this space captures meaning. Words that are similar have similar vectors. "King" and "queen" are close. "King" and "apple" are far apart. Even more interesting, many relationships are approximately preserved. The vector difference between "king" and "man" is similar to the vector difference between "queen" and "woman."
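A small Python sketch can show what "close" and "vector difference" mean here. The three-dimensional vectors below are hand-picked for illustration, not learned from text, and the axis labels (royalty, masculinity, edibility) are an assumption made so the numbers are easy to follow; real embeddings have hundreds of dimensions with no such tidy labels.

```python
import math

# Hand-picked toy vectors: [royalty, masculinity, edibility]. Illustrative only.
VECTORS = {
    "king":  [0.9, 0.9, 0.0],
    "queen": [0.9, 0.1, 0.0],
    "man":   [0.1, 0.9, 0.0],
    "woman": [0.1, 0.1, 0.0],
    "apple": [0.0, 0.0, 1.0],
}


def cosine(a, b):
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def analogy(a, b, c):
    """Return the word whose vector is nearest to vec(a) - vec(b) + vec(c)."""
    target = [x - y + z for x, y, z in zip(VECTORS[a], VECTORS[b], VECTORS[c])]
    candidates = [w for w in VECTORS if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, VECTORS[w]))


print(cosine(VECTORS["king"], VECTORS["queen"]))  # relatively high
print(cosine(VECTORS["king"], VECTORS["apple"]))  # zero for these toy vectors
print(analogy("king", "man", "woman"))            # "king" - "man" + "woman"
```

With these toy numbers, subtracting "man" from "king" and adding "woman" lands on the vector for "queen", which is the relationship described above.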

These embeddings are learned from large amounts of text. The model looks at which words appear near each other. Words that appear in similar contexts get similar embeddings. This simple idea captures an enormous amount of linguistic structure.
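The idea that words in similar contexts get similar vectors can be sketched with simple counting. The example below is a deliberately simplified stand-in, not how modern embeddings are trained: it represents each word by raw counts of its neighbors instead of learning dense vectors, and the six-sentence corpus, the window size of 2, and the function names are all assumptions chosen for illustration.

```python
import math
from collections import Counter, defaultdict

# Tiny invented corpus; a real one would contain billions of words.
CORPUS = [
    "the king rules the kingdom",
    "the queen rules the kingdom",
    "the king wears a crown",
    "the queen wears a crown",
    "i eat an apple",
    "she ate a ripe apple",
]


def cooccurrence_vectors(sentences, window=2):
    """Represent each word by counts of the words seen within `window` positions of it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            start = max(0, i - window)
            end = min(len(tokens), i + window + 1)
            for j in range(start, end):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[key] for key, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


vectors = cooccurrence_vectors(CORPUS)
print(cosine(vectors["king"], vectors["queen"]))  # same neighbors, so very similar
print(cosine(vectors["king"], vectors["apple"]))  # few shared neighbors
```

Nothing in the code knows what a king or a queen is. The two words end up with nearly identical vectors only because the corpus uses them in the same surroundings.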

Transformers: The Current Revolution

The biggest breakthrough in NLP in recent years is the transformer architecture. Introduced in 2017, transformers use a mechanism called "attention" to process language in a fundamentally different way.

Earlier neural models, such as recurrent networks, processed language sequentially: left to right, word by word. Transformers look at all words simultaneously. Each word can attend to every other word, capturing relationships regardless of distance. This allows transformers to use context in ways that earlier models couldn't.
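The core of attention fits in a few lines. The sketch below implements scaled dot-product self-attention in plain Python under a strong simplifying assumption: the input vectors serve directly as queries, keys, and values, whereas a real transformer first multiplies them by learned weight matrices and uses many attention heads. The two-dimensional token vectors are made up for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = vectors.

    Simplification: no learned projection matrices and a single head.
    Returns (outputs, weights), where weights[i][j] is how much token i attends to token j.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Compare this token with every token in the sequence at once.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The output is a weighted average of all token vectors.
        output = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights


# Made-up vectors: tokens 0 and 2 are identical, token 1 is different.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens)
print(weights[0])  # token 0 weights tokens 0 and 2 equally, token 1 less
```

Token 0 gives the same weight to token 2 as to itself even though another token sits between them. The score depends on how well the vectors match, not on how far apart the words are.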

Transformers also scale well. As you make them bigger, with more layers, more attention heads, and more parameters, and train them on more data, performance has tended to keep improving. This scaling has produced models like GPT and BERT that have transformed what NLP can do.

What NLP Can Do Today

Modern NLP systems can perform an impressive range of tasks:

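Machine translation: Systems can translate between hundreds of languages. For widely used language pairs with plenty of training data, quality can approach that of human translators; for languages with little data it is noticeably lower. Some systems can also translate speech and text that appears in images.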

Text summarization: Given a long article, NLP systems can produce a concise summary that captures the key points.

Question answering: You can ask a system a question about a document, and it will find the answer. More advanced systems can answer questions using knowledge drawn from large collections of web text.

Sentiment analysis: Systems can detect the sentiment in text—positive, negative, neutral—and even identify specific emotions like joy, anger, or sadness.

Named entity recognition: Systems can identify names of people, organizations, locations, dates, and other entities in text.

Text generation: Language models can generate coherent, fluent text on almost any topic. They can write emails, articles, poetry, and even computer code.

Conversational AI: Chatbots and voice assistants can carry on natural conversations, answer questions, and perform tasks.

The Challenge of Understanding

Despite these advances, it's important to recognize what NLP systems actually do—and what they don't do.

Modern NLP systems are incredibly good at pattern matching. They've seen billions of sentences and learned the statistical patterns of language. They can produce text that sounds fluent and intelligent. But do they understand what they're saying? That's a deeper question.

Many researchers argue that current NLP systems don't understand language the way humans do. On this view, they don't have beliefs, intentions, or a model of the world. They're sophisticated pattern matchers, not thinking machines.

This distinction matters. When you use a language model to answer a question, you're not talking to something that understands. You're interacting with a system that has learned to predict what words are likely to follow other words. The fact that this produces useful answers is remarkable, but it's not understanding in the human sense.
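Predicting which words are likely to follow other words can be shown at the smallest possible scale. The sketch below is a bigram model, which only looks at the single previous word; it is far simpler than the neural language models discussed here and is offered only as an illustration of the prediction idea. The three training sentences and the function names are invented for the example.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """For each word, count which words were seen immediately after it."""
    following = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for current, nxt in zip(tokens, tokens[1:]):
            following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the most frequently seen next word, or None if the word was never seen."""
    counts = following.get(word)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Tiny invented training text.
SENTENCES = [
    "the cat sat on the mat",
    "the cat chased the mouse",
    "the dog sat on the rug",
]

model = train_bigrams(SENTENCES)
print(predict_next(model, "the"))    # the word seen most often after "the"
print(predict_next(model, "sat"))    # the word seen most often after "sat"
print(predict_next(model, "zebra"))  # None: never seen, so no prediction
```

The model produces a plausible continuation without any notion of what a cat or a mat is. Large language models replace the counting table with a neural network and condition on far more context, but the output is still a prediction about the next word.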

The Future of NLP

NLP is advancing rapidly. Researchers are working on:

Multimodal models: Systems that process language, images, audio, and video together. These models can do things like watch a video, listen to the audio, and answer questions about what happened.

Reasoning: Current models are good at pattern matching but less good at reasoning. Researchers are working on models that can perform multi-step reasoning, use tools, and verify their own answers.

Efficiency: Large language models are enormous and expensive to run. Researchers are developing smaller, more efficient models that can run on phones and edge devices.

Safety and alignment: Language models can generate harmful content. Researchers are working on making them safer, more truthful, and better aligned with human values.

Conclusion

Natural Language Processing has come a long way from rule-based systems and simple statistical models. Today's systems can translate languages, summarize documents, answer questions, and generate coherent text. They're already transforming how we interact with computers and access information.

But the field is still young. The gap between what NLP systems can do and what humans can do remains vast. Closing that gap will require not just bigger models and more data, but deeper understanding of what language is, how it works, and what it means to truly understand.