Machine Learning Explained: How Computers Learn Without Being Explicitly Programmed

Instead of following explicit rules, machine learning models learn patterns from data. This simple idea underlies much of the current wave of AI applications.

Every time your email spam filter catches a junk message, every time your phone recognizes your face, every time Netflix recommends a show you actually want to watch—machine learning is at work.

Machine learning is a branch of artificial intelligence where computers learn from data rather than following explicit instructions. Instead of writing code that says "if the email contains the word 'viagra', mark it as spam," we give the computer thousands of examples of spam and not-spam, and let it figure out the patterns itself.

This might seem magical, but it's actually a simple idea with profound implications.

The Traditional Programming Paradigm

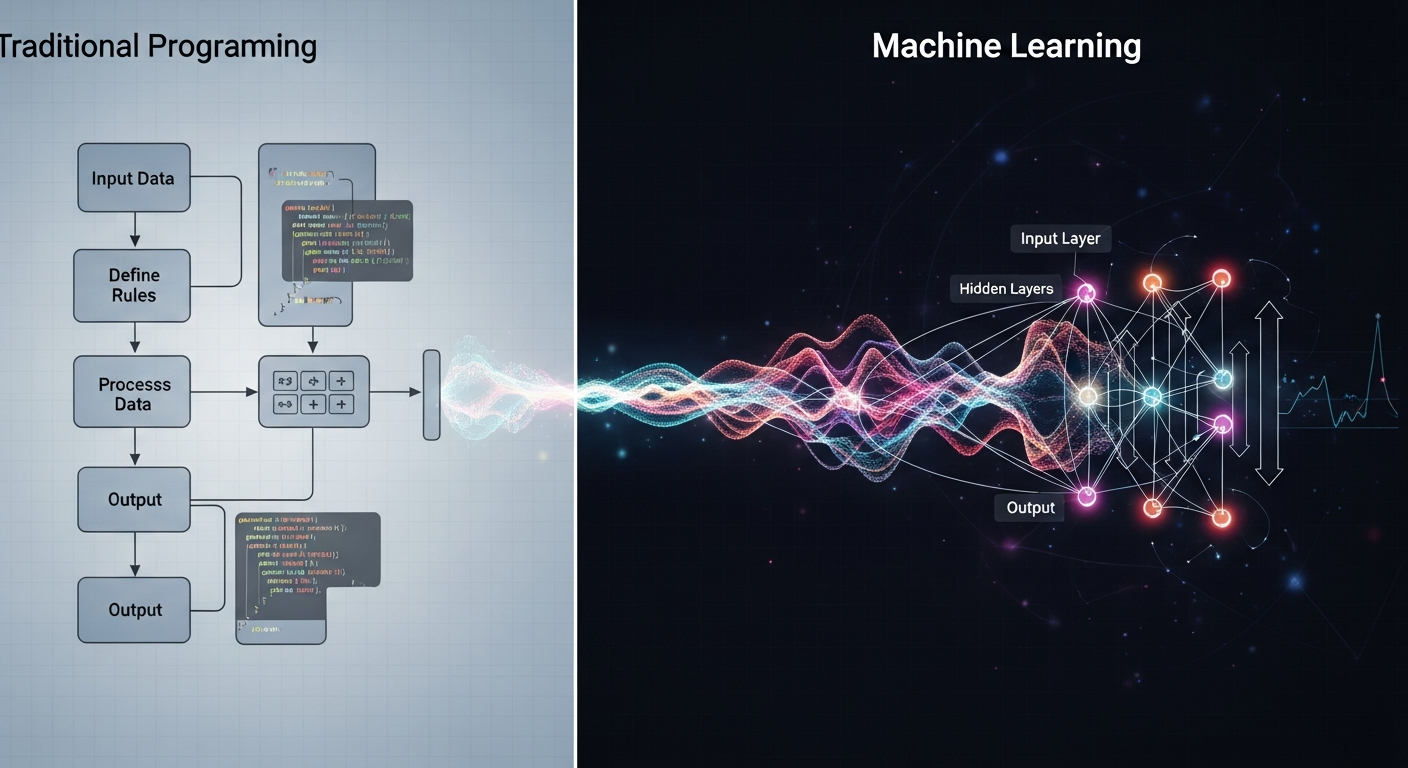

To understand machine learning, it helps to understand how traditional programming works.

In traditional programming, a human writes explicit instructions. The instructions say: given this input, do this, then do that, then output this. The program follows these instructions exactly. If the instructions are correct, the program works. If the instructions are wrong, the program fails.

This approach works well for problems we understand completely. If you want to compute the sum of two numbers, you can write explicit instructions. If you want to sort a list, you can write an algorithm that sorts it.
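To make "explicit instructions" concrete, here is a minimal sketch of a hand-written spam rule in Python. The function name `is_spam_by_rules` and the word list are hypothetical, chosen only for illustration; the point is that every decision the program makes was typed in by a person.

```python
# Hypothetical hand-written rules; the word list is illustrative only.
SPAM_WORDS = {"viagra", "lottery", "winner"}


def is_spam_by_rules(email_text: str) -> bool:
    """Return True if any hand-picked spam word appears in the email."""
    words = email_text.lower().split()
    # Strip common punctuation so "winner!" still matches "winner".
    return any(word.strip(".,!?:;\"'") in SPAM_WORDS for word in words)


print(is_spam_by_rules("You are a WINNER! Claim your lottery prize"))  # True
print(is_spam_by_rules("Lunch at noon tomorrow?"))                     # False
print(is_spam_by_rules("Cheap v1agra, best price"))                    # False
```

The third call shows the program doing exactly what it was told: the misspelled word slips past because nobody wrote a rule for it.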

But many problems don't lend themselves to explicit instructions. How do you write code that recognizes a cat in a photo? How do you write instructions for translating a sentence from English to Turkish? How do you write a program that predicts tomorrow's weather?

These problems are too complex for explicit rules. There are too many exceptions, too many edge cases, too much ambiguity. This is where machine learning comes in.

How Machine Learning Works

Machine learning flips the traditional programming model. Instead of a human writing rules for the computer to follow, the computer discovers the rules from examples.

The process has three main components:

Data: You need examples to learn from. If you want to build a spam filter, you need thousands of emails labeled as "spam" or "not spam." If you want to build a cat recognizer, you need thousands of photos labeled "cat" or "not cat."

Model: The model is a mathematical structure that can learn patterns from data. It typically starts with random parameter values, so its first predictions are little better than guesses. As it sees more examples, it adjusts its internal parameters to make better predictions.

Learning algorithm: This is the procedure that updates the model based on the data. It compares the model's predictions to the actual labels and calculates how wrong the model is. Then it adjusts the model to be less wrong next time.
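The three components above can be sketched in a few lines of plain Python. This is one possible illustration, not the only way to do it: the data is a made-up toy set, the two features (number of links and number of exclamation marks) are assumptions for the example, the model is a simple logistic regression, and the learning algorithm is stochastic gradient descent. The names `DATA`, `predict`, and `train` are hypothetical.

```python
import math
import random

# Data: toy, made-up examples. Each row is (features, label), where the
# features are [number of links, number of exclamation marks] and the
# label is 1 for spam, 0 for not spam.
DATA = [
    ([5.0, 4.0], 1), ([4.0, 6.0], 1), ([6.0, 3.0], 1), ([3.0, 5.0], 1),
    ([0.0, 1.0], 0), ([1.0, 0.0], 0), ([0.0, 0.0], 0), ([1.0, 1.0], 0),
]


def predict(weights, bias, features):
    """Model: a weighted sum squashed to a probability between 0 and 1."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


def train(data, epochs=200, learning_rate=0.1, seed=0):
    """Learning algorithm: stochastic gradient descent on the log loss."""
    rng = random.Random(seed)
    weights = [rng.uniform(-0.5, 0.5) for _ in data[0][0]]  # random start
    bias = 0.0
    for _ in range(epochs):
        for features, label in data:
            # How wrong is the current prediction for this example?
            error = predict(weights, bias, features) - label
            # Nudge each parameter in the direction that shrinks the error.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(DATA)
print(predict(weights, bias, [5.0, 5.0]))  # above 0.5: looks like spam
print(predict(weights, bias, [0.0, 1.0]))  # below 0.5: looks like not spam
```

Nothing in `train` mentions links or exclamation marks by name. The same loop would fit any two-feature dataset, which is the sense in which the rules come from the examples rather than from the programmer.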

The key insight is that this process can work for a wide range of problems, provided you have enough representative labeled examples. The model doesn't need to understand the problem. It just needs to find statistical patterns in the data.

Types of Machine Learning

Machine learning comes in several flavors, depending on the kind of data you have and what you want to do.

Supervised learning: This is the most common type. You have input data and corresponding output labels. You want the model to learn to predict the label from the input. Spam filtering, image recognition, and house price prediction are all supervised learning problems.

Unsupervised learning: Here, you have input data but no labels. You want the model to find structure in the data on its own. Clustering similar customers together, finding topics in documents, and detecting anomalies are examples of unsupervised learning.

Reinforcement learning: In reinforcement learning, an agent learns by interacting with an environment. It takes actions, receives rewards or penalties, and learns to maximize its total reward over time. Reinforcement learning was central to how AlphaGo learned to play Go, and it is one of the main ways robots are trained to walk.
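The act, receive reward, adjust loop of reinforcement learning can be sketched with one of the simplest possible settings, a multi-armed bandit with an epsilon-greedy agent. This is a deliberately tiny illustration and far simpler than what systems like AlphaGo use. The function name `run_bandit` and the reward probabilities are assumptions made up for the example.

```python
import random


def run_bandit(reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent on a multi-armed bandit.

    reward_probs[i] is the hidden chance that action i pays a reward of 1.
    Returns the agent's learned value estimate for each action.
    """
    rng = random.Random(seed)
    estimates = [0.0] * len(reward_probs)  # agent's current guess per action
    counts = [0] * len(reward_probs)       # how often each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action.
            action = rng.randrange(len(reward_probs))
        else:
            # Exploit: pick the action that currently looks best.
            action = max(range(len(estimates)), key=lambda a: estimates[a])
        # The environment responds with a reward of 1 or 0.
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        counts[action] += 1
        # Incremental average: move the estimate toward the observed reward.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates


estimates = run_bandit([0.2, 0.5, 0.8])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
print(estimates, best_action)
```

The agent is never told which action is best. It only sees rewards, yet its estimates drift toward the hidden probabilities and it ends up preferring the highest-paying action.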

The Deep Learning Revolution

For decades, machine learning was limited by the quality of the "features"—the human-designed ways of representing data. If you wanted to recognize faces, you had to design algorithms that detected edges, corners, and facial features. This was tedious and often ineffective.

Deep learning changed this. Deep learning models (neural networks with many layers) learn the features themselves. They start with raw data like pixels or words and automatically discover the hierarchical patterns that matter. In a face recognizer, for example, the first layer might detect edges, the second shapes, the third parts of faces, and the fourth whole faces.

This automatic feature learning is what makes deep learning so powerful. It can work with raw data directly, without hand-engineering features for each new problem.
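To show what "layers building on layers" means mechanically, here is a minimal two-layer network in plain Python that computes XOR. One important caveat: the weights below are set by hand for illustration, whereas a real deep learning model would learn them from data. The names `layer` and `xor_net` are hypothetical.

```python
def step(z: float) -> int:
    """Threshold activation: fire (1) if the weighted input is positive."""
    return 1 if z > 0 else 0


def layer(inputs, weights, biases):
    """One layer: each unit takes a weighted sum of inputs, then thresholds."""
    return [step(sum(w * x for w, x in zip(unit_w, inputs)) + b)
            for unit_w, b in zip(weights, biases)]


# Hand-set weights for illustration; a trained network would learn these.
HIDDEN_W, HIDDEN_B = [[1.0, 1.0], [1.0, 1.0]], [-0.5, -1.5]  # units: OR, AND
OUTPUT_W, OUTPUT_B = [[1.0, -1.0]], [-0.5]                   # OR and not AND


def xor_net(x1: int, x2: int) -> int:
    hidden = layer([x1, x2], HIDDEN_W, HIDDEN_B)  # simple features
    return layer(hidden, OUTPUT_W, OUTPUT_B)[0]   # feature built from features


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_net(a, b))
```

No single unit here can compute XOR on its own. The output unit succeeds only because it combines the simpler OR and AND features from the layer before it, which is the same compositional idea as edges feeding shapes feeding faces.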

Why Machine Learning Works Now

Machine learning has been around for decades. So why is it suddenly everywhere?

Three factors came together in the 2010s to make modern machine learning possible:

Data: The internet created massive datasets. Billions of photos, trillions of words, endless streams of user behavior. Machine learning thrives on data.

Compute: Graphics processing units (GPUs) originally designed for video games turned out to be perfect for training neural networks. A modern GPU can do trillions of operations per second.

Algorithms: Researchers developed new techniques that made deep networks trainable. Techniques like dropout, batch normalization, and better activation functions eased problems, such as overfitting and unstable training, that had held back neural networks for decades.
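As a small illustration of one of the techniques named above, here is a sketch of dropout in plain Python. The article does not describe how dropout works, so treat the details as an assumption: this is the commonly used "inverted dropout" formulation, and the function name and parameters are hypothetical.

```python
import random


def dropout(activations, drop_prob=0.5, training=True, rng=random):
    """Inverted dropout on a list of activations.

    During training, each value is zeroed with probability drop_prob and the
    survivors are scaled by 1 / (1 - drop_prob) so the expected value is
    unchanged. At inference time the input passes through untouched.
    """
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    if not training or drop_prob == 0.0:
        return list(activations)
    keep_scale = 1.0 / (1.0 - drop_prob)
    return [a * keep_scale if rng.random() >= drop_prob else 0.0
            for a in activations]


rng = random.Random(0)
hidden = [0.2, 1.5, 0.7, 0.9]
print(dropout(hidden, drop_prob=0.5, rng=rng))  # each value is 0.0 or doubled
print(dropout(hidden, training=False))          # unchanged at inference time
```

Randomly silencing units during training discourages the network from relying too heavily on any single one, which is why dropout is usually described as a guard against overfitting.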

Limitations and Challenges

Machine learning is powerful, but it's not magic. It has important limitations:

Data hungry: Many machine learning models, especially supervised ones, need large amounts of labeled data. Collecting and labeling that data is expensive and time-consuming.

Brittle: Machine learning models can fail in unexpected ways. A self-driving car trained on sunny roads might fail in snow. A face recognition system trained on one population might fail on another.

Black box: It's often hard to understand why a model made a particular decision. This is a problem for high-stakes applications like medicine or criminal justice.

Bias: Models learn from historical data. If that data contains bias, the model will tend to reproduce it. A hiring model trained on historical hiring data might learn to discriminate against women if the historical data was biased.

The Future

Machine learning continues to advance rapidly. Models are becoming more capable, more efficient, and more reliable. Researchers are working on models that learn from less data, that explain their decisions, and that can adapt to new situations without retraining.

As machine learning becomes more powerful, it will touch every part of our lives. From healthcare to education to transportation to entertainment, machine learning will be the engine that powers the next generation of intelligent applications.

But the fundamental idea remains the same: instead of telling computers exactly what to do, we give them examples and let them learn. It's a simple shift in perspective that has turned out to be extraordinarily powerful.